Reducing Support Load with an AI Helpdesk

A growing SaaS company was overwhelmed by repetitive support tickets: password resets, billing questions, and basic how‑to queries. Agents spent most of their day answering the same questions instead of helping customers with real problems.

Goobo Labs AI partnered with their support and product teams to design an AI helpdesk assistant that could answer common questions, triage complex issues, and learn from previous conversations without disrupting existing tools.

Overview

The client used a standard ticketing system, but workflows had grown organically. Macros and canned responses existed, yet agents still copy‑pasted between tools, and customers waited in queues for simple answers.

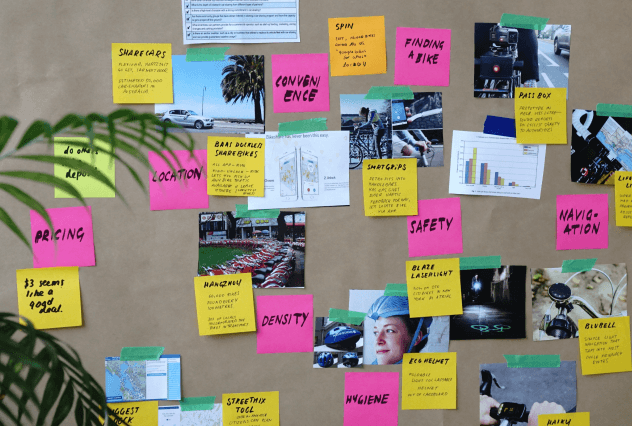

We started by analysing historical tickets to identify the most common topics and where customers were getting stuck. From there, we shaped a scope for the AI assistant: what it should answer autonomously, when to escalate, and how to keep humans firmly in control.

Solution

We trained an AI assistant on existing help centre content, past tickets, and product documentation, with guardrails to stop it from describing product behaviour that those sources do not support. The assistant was embedded into the client’s support portal and internal tools so it could serve both customers and agents.

When the assistant could not confidently resolve an issue, it collected structured context from the customer, tagged the ticket, and handed it to the right queue. Agents saw a pre‑filled summary, reducing time spent on triage and follow‑up questions.
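The escalation path described above can be sketched in Python. This is a minimal, hypothetical illustration, not the client's implementation: the confidence threshold, topic and queue names, and the `AssistantResult`/`Handoff` types are all assumptions, and the confidence score is assumed to come from whatever model or classifier the assistant uses.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Union

# Hypothetical: minimum confidence before the assistant answers on its own.
CONFIDENCE_THRESHOLD = 0.75

# Hypothetical mapping from detected topic to a support queue.
QUEUE_BY_TOPIC = {
    "billing": "billing-queue",
    "account_access": "accounts-queue",
}
DEFAULT_QUEUE = "general-queue"


@dataclass
class AssistantResult:
    topic: str             # topic the assistant detected for the request
    confidence: float      # assumed score in [0.0, 1.0]
    answer: Optional[str]  # draft answer grounded in help-centre content, if any


@dataclass
class Handoff:
    queue: str         # queue the ticket is routed to
    tags: List[str]    # tags applied to the ticket
    summary: str       # pre-filled summary shown to the agent


def handle(result: AssistantResult, context: Dict[str, str]) -> Union[str, Handoff]:
    """Answer directly when confident; otherwise tag, summarise and route."""
    if result.answer is not None and result.confidence >= CONFIDENCE_THRESHOLD:
        return result.answer

    queue = QUEUE_BY_TOPIC.get(result.topic, DEFAULT_QUEUE)
    tags = [f"topic:{result.topic}", "ai-escalated"]
    # Structured context collected from the customer, one "key: value" per line.
    details = [f"{key}: {value}" for key, value in sorted(context.items())]
    summary = "\n".join(
        [f"Topic: {result.topic} (confidence {result.confidence:.2f})", *details]
    )
    return Handoff(queue=queue, tags=tags, summary=summary)
```

Keeping the threshold and queue mapping as plain data makes them easy to adjust during a pilot without touching the routing logic.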

Process

Ticket analysis

Cluster historical tickets to understand frequent topics, failure modes, and where automation would be safe.
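As a rough illustration of this step, the sketch groups ticket subjects with simple single-pass ("leader") clustering over word sets, using Jaccard similarity. This technique is an assumption chosen for readability, not necessarily what was used on this project; the stop-word list and the 0.3 threshold are hypothetical, and a real analysis would more likely cluster text embeddings of full ticket bodies.

```python
import re
from typing import List, Set

# Hypothetical stop-word list; a real analysis would use a fuller one.
STOP_WORDS = {"the", "a", "an", "my", "i", "to", "is", "how", "do", "can",
              "not", "of", "on", "in", "for", "me", "it", "was", "why"}


def tokens(text: str) -> Set[str]:
    """Lower-case word set for a ticket subject, minus stop words."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOP_WORDS}


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Jaccard similarity of two token sets (0.0 when both are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def leader_cluster(subjects: List[str], threshold: float = 0.3) -> List[List[str]]:
    """Each subject joins the first cluster whose leader (first member) is
    similar enough; otherwise it starts a new cluster."""
    leaders: List[Set[str]] = []
    clusters: List[List[str]] = []
    for subject in subjects:
        toks = tokens(subject)
        for i, leader in enumerate(leaders):
            if jaccard(toks, leader) >= threshold:
                clusters[i].append(subject)
                break
        else:
            leaders.append(toks)
            clusters.append([subject])
    # Largest clusters first: these are the candidate topics for automation.
    return sorted(clusters, key=len, reverse=True)
```

Single-pass clustering depends on input order and the threshold, so it is best treated as a quick first look at frequent topics rather than a final taxonomy.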

Assistant design

Define the AI assistant’s responsibilities, escalation rules, and tone of voice aligned with the brand.

Pilot & tuning

Launch to a subset of customers and internal users, monitor conversations, and tune prompts and the knowledge base.
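One common way to pick a stable pilot subset is to hash each customer ID into a bucket from 0 to 99 and enable the assistant for buckets below a chosen percentage. The case study does not say how the pilot cohort was selected, so this is only a sketch: the function name, the salt and the string customer ID are hypothetical.

```python
import hashlib


def in_pilot(customer_id: str, percent: int, salt: str = "ai-helpdesk-pilot") -> bool:
    """Return True if this customer falls in the pilot cohort.

    Hashing salt + ID gives each customer a stable bucket in 0-99, so the
    same customer always gets the same experience across sessions.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    digest = hashlib.sha256(f"{salt}:{customer_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < percent
```

Because the bucket depends only on the salt and the ID, raising `percent` from 10 to 50 keeps every existing pilot customer in the cohort while adding new ones.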

Rollout & training

Roll out more broadly, train agents on how to work with the assistant, and keep refining based on real usage.

Outcomes

Within weeks, the AI assistant was handling a large share of simple questions end‑to‑end, while providing better context on complex issues. First‑response times dropped, the backlog shrank, and agents could focus on higher‑value work such as debugging tricky edge cases and advising customers.